Bioinformatics

In the past, a common strategy for dealing with large data sets was to acquire powerful servers with high processing capacity. Today, because technology develops so rapidly, any such server soon becomes outdated, and ordinary PCs quickly match its performance. The approach has therefore shifted towards connecting many standard computers into a cluster whose combined performance exceeds that of a single powerful machine.

The constant and rapid development of molecular genetic technology frequently changes how research in the life sciences is approached. Among other things, new techniques accelerate data generation. This places heavy demands on data processing, particularly on the quality control and analysis of big data sets. Meeting these ever-increasing demands requires robust, efficient and fast statistical tools.

To enable successful collaboration between the five self-contained institutions, their geographically dispersed resources will be connected through a collaborative repository for data storage and information exchange. High computational demands have already made it necessary to move the more demanding data processing operations to the "statistical" server acquired by the University of Zagreb Faculty of Agriculture. More recently, these operations have also moved to the "Isabella" computing cluster of the University Computing Centre (SRCE). To further improve communication and information exchange, a user-friendly interface needs to be developed as a gateway for easy access to data queries and result reports. The "Isabella" cluster will be the main platform for data processing, because its availability and continuous expansion ensure that it can fully meet our needs.

The major objectives of the planned activities are:

- Set up cloud storage as a collaborative repository for data collection and information exchange among the five institutions involved in the project.

- Relocate data management and analysis processes from servers and PCs to computer clusters, in order to meet the increasing demand for computing power when handling big data sets.

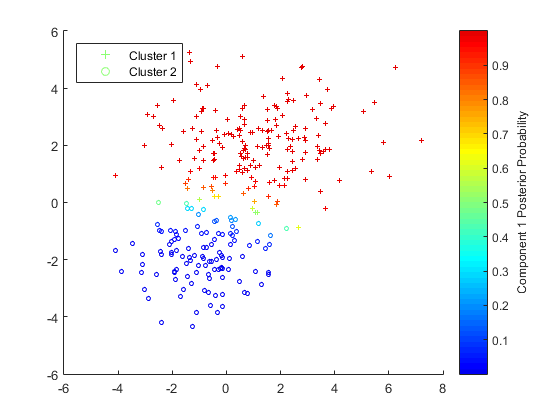

- Implement novel statistical methods for the quality control and analysis of big data sets.